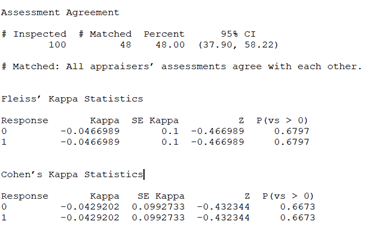

Kappa Value/ Kendall's Coefficient - We ask and you answer! The best answer wins! - Benchmark Six Sigma Forum

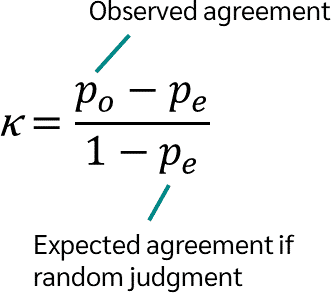

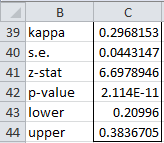

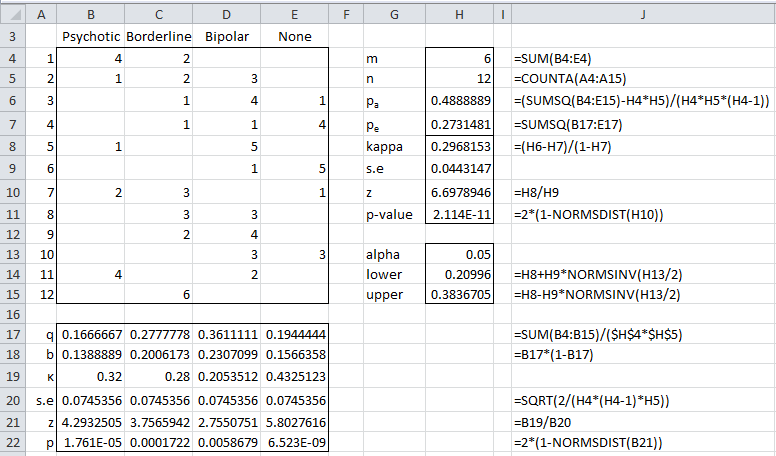

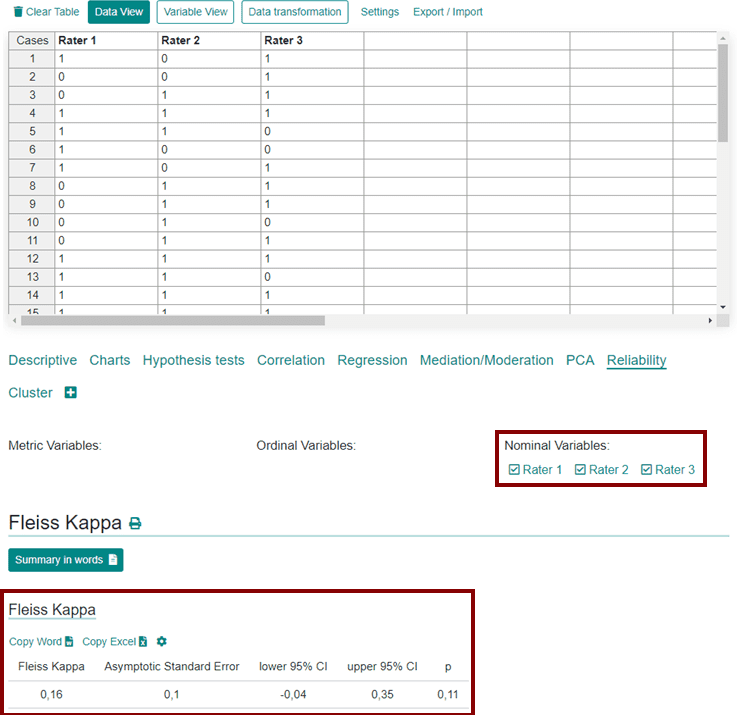

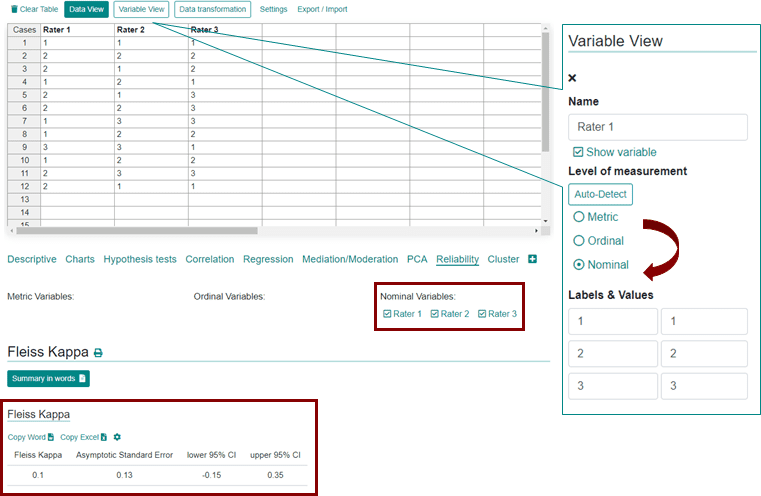

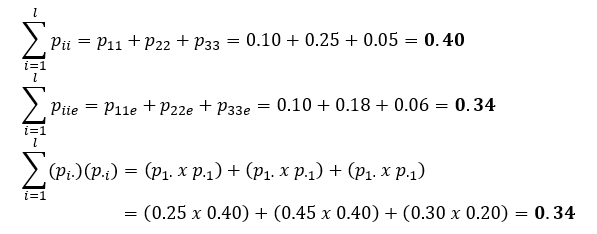

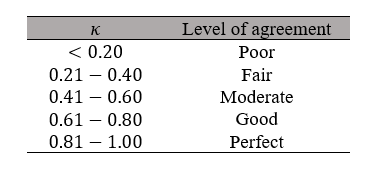

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

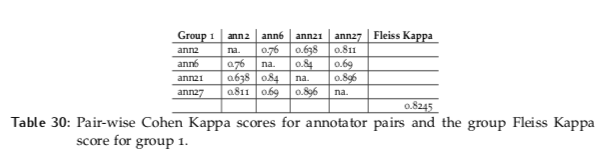

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

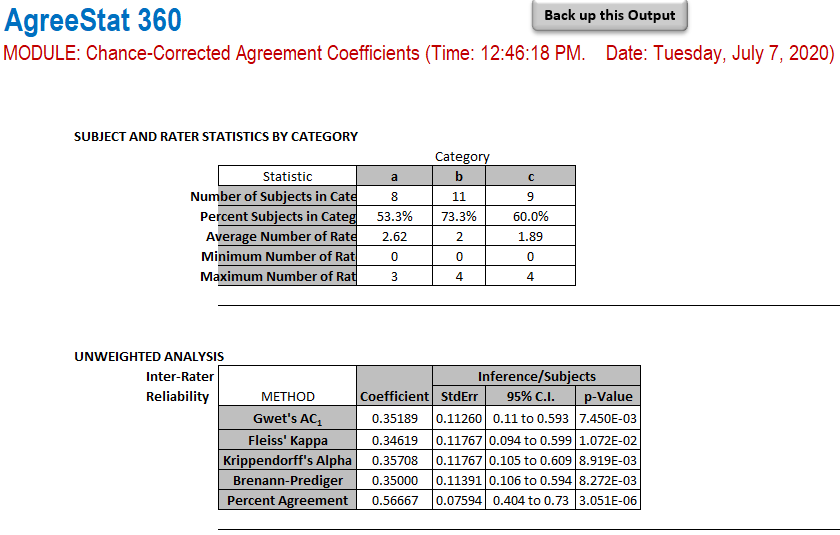

AgreeStat/360: computing agreement coefficients (Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) with ratings in the form of a distribution of raters by subject and category